While many developers use artificial intelligence to streamline backend workflows, Studio Atelico is putting it directly into the player’s hands.

From the personalized creature generation in Bobium Brawlers to the technical architecture of the Atelico AI Engine, we sat down with Piero Molino to discuss a new frontier in game design.

Studio Atelico describes itself as an “AI-first” studio. What does that actually change in your development process and decision-making compared to a traditional studio?

Making games is an artistic endeavor, requiring humans at every stage of the process, because human judgment and taste are what make them special. That said, we are firm believers in the power of Generative AI and “AI-first,” which, for us, means three very different things at the same time.

First of all, our games are “AI-first”, meaning that they adopt AI, specifically generative AI, during gameplay. This materializes in personalized procedural content generation in our first game Bobium Brawlers, but will also mean language-based ways of interacting with the games, adapting NPCs and dialogue, and in general, new AI-based mechanics. This greatly impacts how we design our games.

Second, for us being “AI-first” means that we are also building the AI technology that enables those games. No solution on the market makes gen AI in games viable, by which we mean that it does not bankrupt the developer if the game is successful and that it gives developers the tools and control they need. So we are building our own, focusing on running AI locally on the player’s device.

Third, we are “AI-first” in the adoption of AI technology for game development, in particular, coding assistants that help our productivity substantially. While we certainly utilize AI in our daily workflows, we are way more interested in how AI can be used to offer a unique and compelling experience to the player.

How does generative AI function in your gameplay systems today? Is it enhancing traditional mechanics, or enabling entirely new kinds of player interaction?

In Bobium Brawlers, AI does a bit of both.

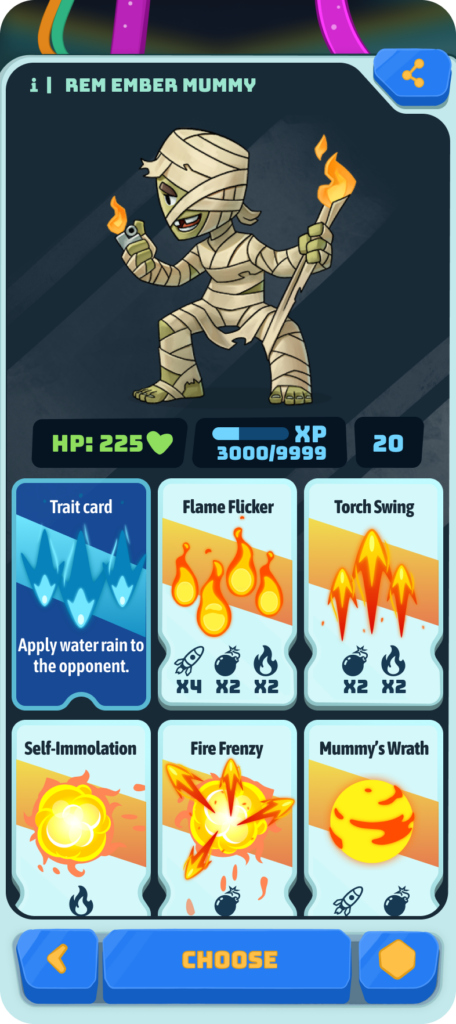

It fosters a very personal connection to the game for the player by allowing them to create any creature that comes to their mind by just describing it.

Then, our tech creates an image of the creature and a thematic deck of cards that conforms to a ruleset we have crafted. The resulting experience feels both familiar, with gameplay that is easy to understand and internalize, and brand new, in that you have never been able to do something like this in a game before.

We have ideas for other fun things the tech can bring to the playing experience, like a battle summary that changes depending on the date, weather, or a player’s mood, for example.

What are the hardest design challenges when building systems powered by generative AI?

It’s been a really fun challenge for us! A key part of this has been to design a framework in which the game’s rules are clearly defined and then to leave enough room for the LLM to shine. LLMs are non-deterministic, so we want to lean into the sometimes wacky outcomes without breaking the game rules.

Before settling on this game, we built many prototypes and discovered that they sat on a spectrum, from clockwork designs where game rules were prominent to free-form designs where AI was prominent. The most fun prototypes were somewhere in the middle of the spectrum, where both hand-designed mechanics and AI stochasticity played a role, resulting in something better than either part alone.
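To make the idea of clearly defined rules with room left for the model more concrete, here is a minimal Python sketch. It is not Studio Atelico's code: the `Card` fields, the `ALLOWED_EFFECTS` set, the numeric limits, and the `sanitize_card` and `build_deck` helpers are all hypothetical. It assumes the model returns card proposals as plain dictionaries; free-form fields such as the name pass through untouched, while rule-bound fields are clamped or rejected.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical ruleset: illustrative limits, not Bobium Brawlers' actual rules.
ALLOWED_EFFECTS = {"damage", "heal", "shield"}
MAX_COST = 5
MAX_POWER = 10
DECK_SIZE = 3


@dataclass(frozen=True)
class Card:
    name: str    # free-form text, left entirely to the model
    effect: str  # must be one of ALLOWED_EFFECTS
    cost: int    # kept within 1..MAX_COST
    power: int   # kept within 1..MAX_POWER


def clamp(value: int, low: int, high: int) -> int:
    """Force value into the inclusive range [low, high]."""
    return max(low, min(high, value))


def sanitize_card(raw: dict) -> Optional[Card]:
    """Turn one model-proposed card into a legal Card, or None if it cannot be fixed."""
    effect = str(raw.get("effect", "")).lower()
    if effect not in ALLOWED_EFFECTS:
        return None  # unknown mechanics are rejected rather than guessed at
    try:
        cost = int(raw.get("cost", 1))
        power = int(raw.get("power", 1))
    except (TypeError, ValueError):
        return None  # non-numeric stats cannot be repaired
    name = str(raw.get("name", "")).strip() or "Unnamed card"
    return Card(name, effect, clamp(cost, 1, MAX_COST), clamp(power, 1, MAX_POWER))


def build_deck(proposed: List[dict]) -> List[Card]:
    """Keep the first DECK_SIZE legal cards; raise if the model supplied too few."""
    legal = [c for c in (sanitize_card(r) for r in proposed) if c is not None]
    if len(legal) < DECK_SIZE:
        raise ValueError("not enough legal cards; ask the model again")
    return legal[:DECK_SIZE]
```

In this sketch a wacky name like "Lava Sneeze" survives as written, an overpowered cost is pulled back inside the limits, and a card with an effect the rules do not know is dropped, so the non-deterministic part can only vary inside the space the designers defined.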

Can you walk us through the Atelico AI Engine, and whether you see it purely as an internal tool or as something that could evolve into a broader platform?

We absolutely envision the Atelico AI Engine being useful to any developer looking to turn generative AI into compelling interactive experiences. Our current plan is to build out the main functionality of the engine while building Bobium Brawlers and then to release a “v1” of the engine for other developers to use.

Ahead of that, we are already working with some other studios that use our engine in their games, which has allowed us to start polishing the engine and making it more robust for when it becomes widely available.

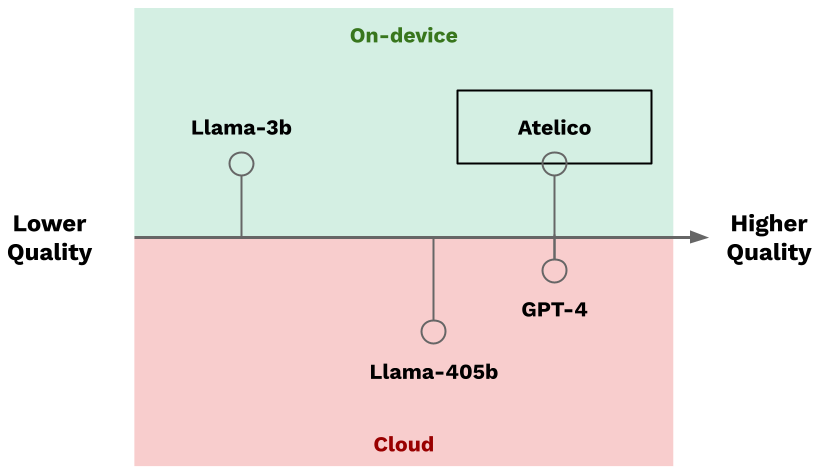

But, to give a high-level overview of the engine: Atelico AI Engine is a high-performance, on-device inference platform that enables large language models and image generation to run directly on players’ devices, with no cloud required. It is designed as an SDK that developers can embed into their games or run locally.

Think of it as what Havok is for physics, but for AI. The engine supports modern open-weight foundation models along with highly optimized, quantized variants we created that run efficiently on consumer hardware. It is optimized across Metal for Apple Silicon, CUDA for NVIDIA GPUs, and CPU, allowing it to scale from high-end gaming PCs down to lower-spec mobile devices while maintaining strong performance.

In addition to text and image generation, Atelico supports embeddings and classification. This enables advanced gameplay systems such as personalized NPC behavior, dynamic dialogue, and adaptive world-building.

A key differentiator is that everything runs locally. This removes network latency, eliminates per-request costs, and ensures that player data never leaves the device. Atelico also incorporates proprietary optimizations that deliver order-of-magnitude efficiency gains compared to conventional inference systems, making real-time on-device AI practical at game scale.

The engine was built specifically to overcome the limitations of cloud-based AI in games, giving developers full control over performance, cost, and the overall player experience without relying on external API providers.

When using generative AI in your art pipeline, how do you ensure a consistent art direction and preserve a cohesive visual identity across the game?

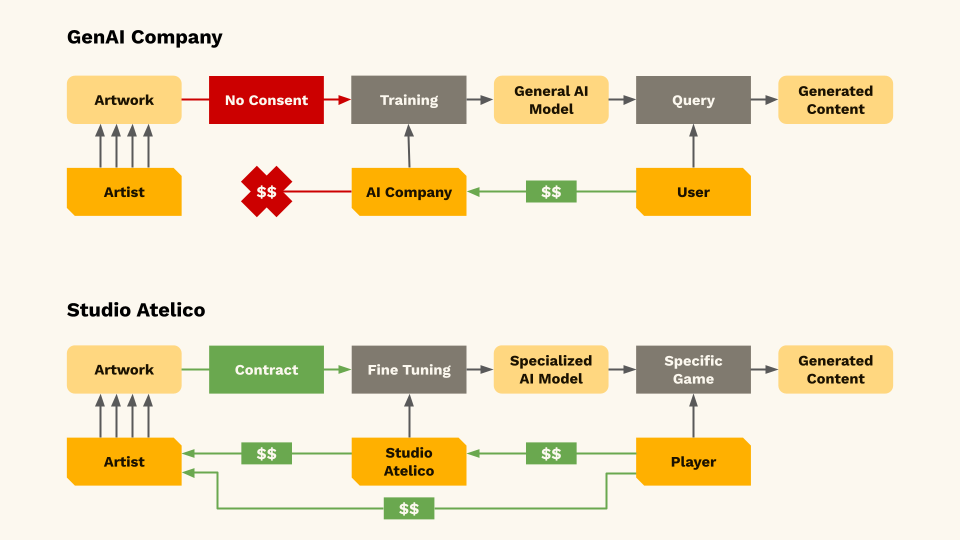

This question gets to the heart of who we are as a company. We are not interested in the use of our tech to replace artists or save time/money on game development. We believe that humans come first and have worked hard to help protect artists in these sometimes uncertain times.

For example, we recently developed ARC, which is a draft legal agreement for artists to use when working with studios. It’s meant to protect the artist’s work, establish clear guidelines about what is and isn’t permissible with their art, and to also offer them a share in the upside should their art be used to train models.

For Bobium Brawlers, we worked extensively with our art team to train our ImageGen model on their art style. The result is a model that generates images that are consistent and fit within the vibe of the game and the lore.

Other companies use similar techniques for their internal asset generation, but we don’t. We use this exclusively for in-game creature generation, because we want to use AI for things that were not possible before. In this case, generating the portrait of a creature the player just made up in a few seconds was not possible at all, especially at the scale of millions of players.

You’re effectively turning generative AI into a form of player-driven content creation in Bobium Brawlers. Where do you see the line between UGC and AI-generated content blurring, and what does that unlock for game design?

There have been two major innovations in game content over the last 20 years: user-generated content (UGC) and procedurally generated content (PGC). Procedural generation is actually much older, going back at least to Elite, but it really became central with the rise of roguelikes and roguelites, with games like Spelunky. These systems generate a lot of replayability, but the player has little direct control over the content itself.

On the other side, you have UGC, which really took off with Minecraft. That shifted games from something you just play into something you also create. But creation tools can be time-consuming and require a level of effort that not every player wants to invest.

What AI enables is a convergence of the two. You can have procedurally generated content that is shaped and directed by the player, or user-generated content that is assisted by AI to make creation faster and more accessible. At that point, the distinction between UGC and PGC starts to blur.

In Bobium Brawlers, for example, players can create highly personalized creatures just by describing them. That’s a form of UGC, but it’s also a kind of personalized PGC. It sits right at the intersection.

This shift points toward a future where games are far more customized and personal. Two players could have dramatically different experiences in the same game. For designers, that unlocks entirely new ways to surprise and engage players.

We think this will evolve similarly to how physics entered games. Early on, it showed up in simple, novelty-driven ways like ragdoll effects. Then it became foundational to gameplay in titles like Half-Life 2, and eventually enabled entirely new experiences like Portal. With AI in games, we’re still in that early, experimental phase. It’s already compelling, but it’s clear there’s much more to come.

What has player feedback on Bobium Brawlers taught you about how gamers actually engage with AI-driven systems versus how you expected them to?

We just went Alpha with a small cohort of players, so it’s still early days, but players have really loved the creature creation.

We think we still have some short- to medium-term UI/UX challenges to solve, though. For example, we think the AI chatbot box may not always be the best way to get player input, and we are experimenting with other ways to transform players’ ideas into creation.

We also discovered that players are both more lenient towards AI-generated content and more demanding of it. On one hand, if they realize their request was a bit too far out there, they appreciate it if the AI generator made an honest attempt.

On the other hand, we see players fixating on creating many creatures until they get one exactly the way they want it. Because of this, some players become very attached to their creations.

What kinds of game genres or experiences do you believe will only exist because of AI?

We think there are many new kinds of experiences ahead of us. It’s still early, so it’s hard to fully map them out, but we tend to think about it in three buckets.

The first is what you might call the “sprinkle on top” category. These are games that could exist without generative AI, but are meaningfully enhanced by it. For example, a strategy game where you can negotiate with opponents through natural language, or a fighting game where the character creator becomes conversational instead of menu-driven.

The second category is games with generative AI at the core. A good mental model is a future version of The Sims, where you can actually talk to characters, understand their internal reasoning, and interact with systems that go far beyond predefined behaviors. Here, AI is not just an enhancement, it is the game.

The third category is the most interesting one. These are games that we can’t really predict yet, because they will emerge as developers become fluent with the tools and start exploring their edges. You wouldn’t have imagined something like World of Goo before physics engines, or Wolfenstein 3D before real-time rendering made it possible.

This is where things get truly exciting. As designers start working with personalization, language as a core interface, and effectively infinite content generation, we’ll see entirely new genres emerge. And like past shifts in game technology, many of them will feel obvious only in retrospect.

What’s the biggest risk that could cause AI-first games or studios to fail?

Video games are already a difficult business. Any studio faces structural challenges like discoverability, competition for attention, and rising development costs. AI-first studios inherit all of that, and then add a few new headwinds on top.

One of the biggest is player sentiment. There is a real and understandable skepticism toward AI among many gamers. We share the desire to avoid low-quality, “slop-like” experiences, and we think those concerns are valid. It’s important that the industry has thoughtful conversations about how this technology is used and where boundaries should be. We’ve always maintained there is a right, ethical way to build with AI, and we try to hold ourselves to that standard.

The second challenge is technical and economic. A lot of current generative AI relies on cloud infrastructure with cost structures that don’t map well to games at scale. If every player interaction incurs a marginal cost, it becomes very hard to design sustainable systems.

That’s one of the reasons we’ve focused on on-device inference. By running everything locally, developers can use AI without per-request costs, while also improving latency and preserving player privacy.
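The scaling argument above is simple arithmetic, and a short Python sketch makes it explicit. The function name and every input value used with it are hypothetical placeholders, not figures from Studio Atelico or from any provider's pricing. It assumes cloud inference is billed per request, so the bill is the product of player count, requests per player, and price per request.

```python
def monthly_cloud_cost(players: int,
                       requests_per_player: float,
                       cost_per_request: float) -> float:
    """Monthly inference bill under a purely per-request pricing assumption."""
    return players * requests_per_player * cost_per_request


def cost_growth(players_before: int, players_after: int,
                requests_per_player: float, cost_per_request: float) -> float:
    """Ratio of the bill after growth to the bill before it."""
    before = monthly_cloud_cost(players_before, requests_per_player, cost_per_request)
    after = monthly_cloud_cost(players_after, requests_per_player, cost_per_request)
    return after / before
```

Under this assumption the bill grows in direct proportion to the audience: ten times the players means ten times the cost, regardless of the placeholder price chosen. With on-device inference the per-request term is zero for the developer, which is the property the answer above points to.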

Overall, we’re realistic about the risks, but optimistic. If the industry approaches this thoughtfully, we think it’s possible to address both the perception challenges and the underlying technical constraints, and bring both players and developers along.

Image Source: Studio Atelico

Looking ahead to 2026 and beyond, do you see AI-native studios fundamentally outperforming traditional studios, or will the industry settle into a hybrid model?

We don’t think it’s controversial to say that there will be hit games that use generative AI in some way, both in development and in gameplay. There will also be hit games that don’t. There’s plenty of room for both models to succeed.

What is changing, though, is how games are made. More broadly, the way we build software is shifting quite dramatically, and game development is already moving in that direction as well. AI is becoming part of the toolkit, whether studios position themselves as “AI-native” or not.

So rather than a clean split, we expect a hybrid model to emerge. Some studios will lean heavily into AI, others will use it more selectively, but most will adopt it where it makes sense.

At the same time, games are not just software. They are artistic, cultural, and entertainment products. There will never be a substitute for human taste, judgment, and creativity. AI can expand what’s possible, but it won’t replace the role of people in deciding what is actually worth making.

Piero Molino is CEO and Co-founder of Studio Atelico.